OpenAI Outshines Leading Chatbots in Challenging Chess Showdowns

OpenAI's o3 model won a chess tournament between general-purpose chatbots on Kaggle Game Arena, beating rivals that included xAI's Grok 4 in the final. The result sets a new reference point for chatbot capabilities in strategic gameplay.

TL;DR

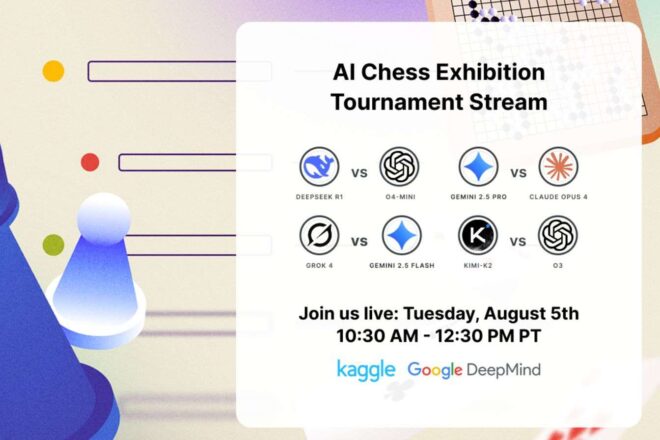

- Chatbots competed in chess on Kaggle Game Arena.

- OpenAI's o3 model dominated, beating rivals including Grok 4.

- Specialized machines like Deep Blue still outperform chatbots.

A Digital Chess Tournament Unveils AI Progress

Nearly three decades have passed since Deep Blue, IBM's legendary supercomputer, famously defeated Garry Kasparov, reshaping perceptions of what machines could achieve. With its 32 processors and the ability to analyze some 200 million chess positions every second, Deep Blue set a new standard for specialized computing. That legacy has spurred significant advances in fields as varied as finance and pharmaceuticals.

Fast-forward to the present: the landscape is evolving again. Instead of purpose-built supercomputers, we now see generalist conversational AI models, familiar to millions in daily life, stepping onto the virtual chessboard. These agents recently squared off in a unique competition organized on the renowned benchmarking platform, Kaggle. Aptly titled the Kaggle Game Arena, this tournament offered an unprecedented opportunity to assess not just raw computational power, but also reasoning and adaptability—traits essential to both chess and modern artificial intelligence.

Showdowns Highlight New AI Contenders

What unfolded was more than just a game. OpenAI's o3 model proved formidable, systematically eliminating challengers, including its own sibling, o4 mini, on the path to victory. In the final, o3 swept aside Grok 4, the entry from rival company xAI, in four straight games. Meanwhile, the battle for third place featured a tense match between o4 mini and Google's Gemini 2.5 Pro; after a hard-fought draw, Google's entry ultimately clinched the spot.

Notably, organizers implemented seeded pairings to ensure favorites didn’t face off prematurely—a move that kept both suspense and fairness intact. Matches were broadcast with a carefully adjusted pace to keep audiences engaged and make the intricacies of AI chess accessible.
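The article does not say how the organizers built their bracket. As a rough illustration of what seeded pairings generally mean, here is a minimal Python sketch of standard single-elimination seeding. The function name `seeded_bracket` and the placeholder entrant labels are hypothetical and are not taken from Kaggle.

```python
def seeded_bracket(players):
    """Return first-round pairings for a single-elimination bracket.

    `players` is assumed to be ordered from strongest seed to weakest,
    and its length must be a power of two. This is a generic textbook
    scheme, not the method Kaggle is known to have used.
    """
    n = len(players)
    if n < 2 or n & (n - 1):
        raise ValueError("number of players must be a power of two (>= 2)")

    # Build the slot order by repeatedly mirroring each seed against the
    # weakest remaining seed: [1] -> [1, 2] -> [1, 4, 2, 3] -> ...
    order = [1]
    while len(order) < n:
        size = 2 * len(order)
        order = [s for seed in order for s in (seed, size + 1 - seed)]

    # Map seed numbers to players, then pair adjacent slots.
    slots = [players[s - 1] for s in order]
    return list(zip(slots[0::2], slots[1::2]))


# Hypothetical entrants, labeled by seed only.
entrants = [f"seed-{i}" for i in range(1, 9)]
for a, b in seeded_bracket(entrants):
    print(a, "vs", b)
```

With eight entrants, this scheme places seeds 1 and 2 in opposite halves of the bracket, so the two favorites can only meet in the final.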

Kaggle’s Broader Ambition: Benchmarking AI Intelligence

But what does it all mean? For Kaggle, such contests are far more than mere entertainment. As one organizer put it: "a game like chess provides an unambiguous signal of true AI capabilities." Here lies the crucial point: these tournaments are designed to probe not only how well chatbots follow instructions, but also their capacity for strategic thinking and on-the-fly adaptation.

Industry observers have been quick to point out that, while these general-purpose chatbots perform impressively compared with typical consumer expectations, they remain several steps behind bespoke systems like Deep Blue.

To summarize:

- Kaggle Game Arena underscores remarkable advances in conversational AI models.

- The gap with specialized computing giants remains substantial—at least for now.